UASOL

A Large-scale High-resolution Outdoor Stereo Dataset

Authors: Zuria Bauer, Francisco Gomez-Donoso, Edmanuel Cruz, Sergio Orts-Escolano and Miguel Cazorla

Journal: Scientific Data - Nature Publishing Group (2019)

Abstract

We propose a new dataset for outdoor depth estimation from single and stereo RGB images. The dataset was acquired from the point of view of a pedestrian. Currently, the most recent approaches rely on deep learning-based techniques, which have been shown to outperform traditional computer vision methods. Nonetheless, these methods require large amounts of reliable ground-truth data. Although several datasets that could be used for depth estimation already exist, almost none of them are outdoor-oriented and captured from an egocentric point of view. Our dataset provides a large number of high-definition pairs of color frames and corresponding depth maps from a human perspective. In addition, the proposed dataset features human interaction and great variability of data, as shown in this work.

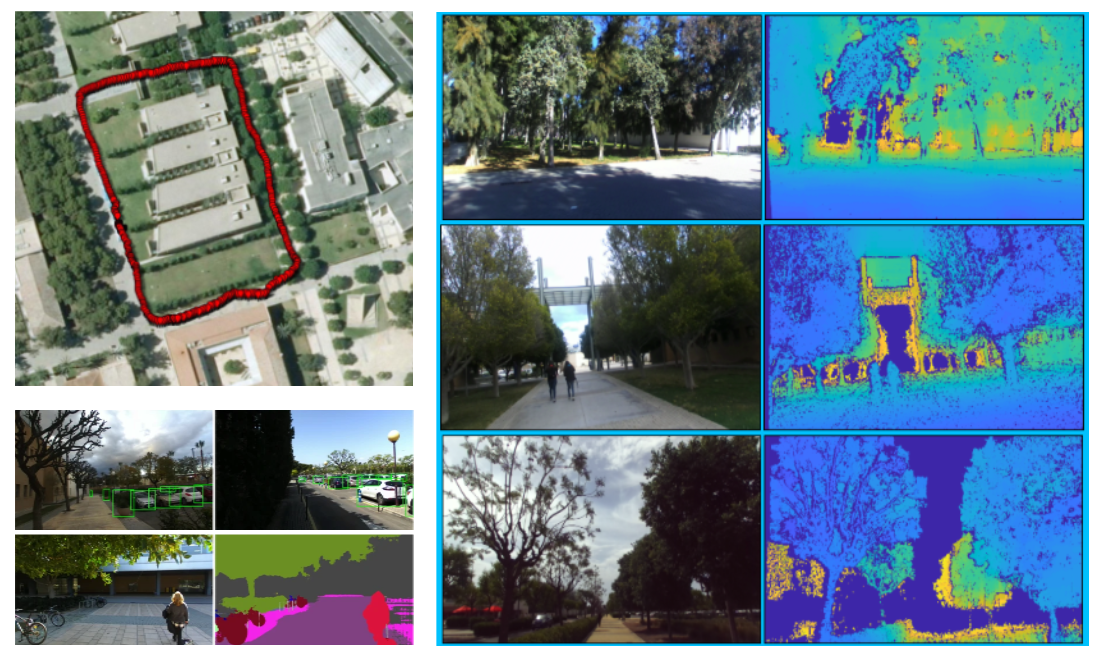

General overview of the dataset.

Below, we present some samples of the data provided by UASOL: a visualization of the GPS tags, RGB-D samples and, lastly, the object counting procedure and the semantic variability analysis used for the Technical Validation of the data.

BibTeX


@article{bauer2019uasol,

title={UASOL, a large-scale high-resolution outdoor stereo dataset},

author={Bauer, Zuria and Gomez-Donoso, Francisco and Cruz, Edmanuel and Orts-Escolano, Sergio and Cazorla, Miguel},

journal={Scientific Data},

volume={6},

number={1},

pages={1--14},

year={2019},

publisher={Nature Publishing Group}

}